SteelConnect EX SD-WAN Enterprise Data Center Integration

Unfortunately, a blog post is not long enough to detail all the possible options, and it would be foolish of me to try to address this topic exhaustively: there are as many data centers as there are enterprise customers. Instead, I am going to focus on the main principles an architect should follow when integrating SteelConnect EX into their network, along with some good questions to ask yourself.

Data center ≠ Head-End

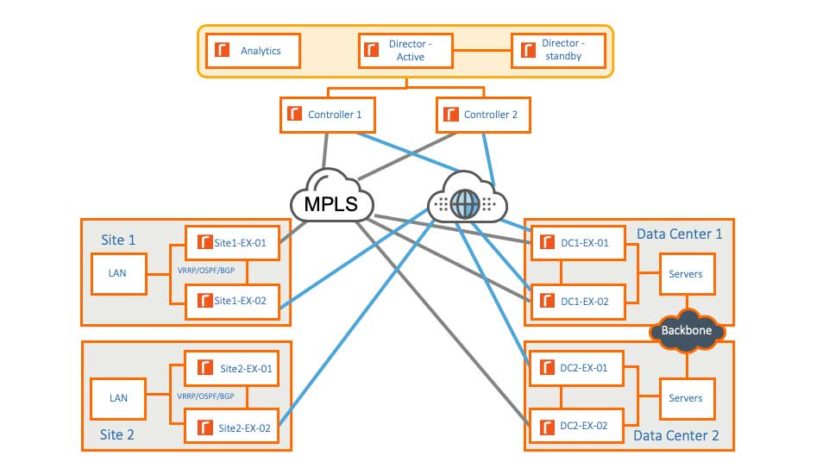

In a previous post, we reviewed the components of the solution:

- Director is the component responsible for the management plane of the SD-WAN fabric;

- Analytics offers visibility into the network by collecting metrics and events via IPFIX and Syslog from branch gateways;

- Controller is in charge of the control plane for the SD-WAN Fabric;

- Branch Gateways – also known as SteelConnect EX appliances

The Director, Analytics and Controller form what we call the Head-End. Although they can be hosted in a traditional data center, they are not part of the data plane (the controller in particular). Therefore, a “branch” gateway is required in the data center to join this site to the SD-WAN fabric.

Starter or dessert, that is the question

In any case, the first brick to deploy should always be the Head-End, whether it is hosted in your data center, in the Cloud, in a dedicated site or as a managed/hosted service.

Then, should we start the rollout of the SD-WAN infrastructure with the data center or keep it for the end? This question pops up all the time, and the best answer is: it depends…

Data centers are traditionally more complex networks, so my preference is to start there; the rest of the rollout is then easier and incremental. Additionally, since the data center terminates most of the connections from branch offices that consume apps, you can quickly benefit from offloading traffic from MPLS to an Internet uplink and leverage path resiliency features (FEC, Packet Racing, load balancing…) along with Application SLAs to enhance the user experience. Furthermore, as we deploy SD-WAN gateways in remote sites, we can track the performance of the data center appliances and validate the initial sizing assumptions.

Nevertheless, there are cases where it can make sense to conclude the rollout with the data center. It really depends on your drivers for adopting SD-WAN, your constraints (say, a network freeze in the data center for a given period) and how quickly you can get value. For example, if Direct Internet Breakout is a requirement for offloading your MPLS and improving the performance of SaaS or Cloud-based applications, deploying gateways in the remote sites first will certainly deliver value. There is no need for the data center to be ready in that case. Another example is router end of life. Should you need to replace your routers in the branches, a SteelConnect EX appliance can be installed as a plain router first. SD-WAN features can be enabled at a later stage.

There are no good or bad answers here. Review your drivers for adopting SD-WAN and plan accordingly.

The golden rules

Deploying SteelConnect EX in your data center should be hassle free as long as you follow these few rules:

- It is a router! As long as you are using standard routing protocols like BGP and OSPF, you can deploy the gateway the way you want. As opposed to most of the other solutions on the market, with SteelConnect EX you will benefit from all the bells and whistles of the routing protocols so you have full control and a lot of flexibility.

- The controller must be on the WAN side of the gateway. Should you deploy the Head-End in the data center, you need to make sure that the only way for the appliance to form overlay tunnels with the controller is from its WAN interfaces.

- The data center gateway can’t sit between the controller and the remote site gateways. This is the corollary of the previous rule. Should you deploy the Head-End in the data center and, for example, replace the MPLS CE router with the SD-WAN gateway, you need to make sure that the controller has a different connection to MPLS or, if that’s not possible, that the controller is only reachable via the Internet.

- The data center gateway can’t get access to the control network. It is a best practice to isolate the control network that interconnects the Head-End components (see our previous post about the architecture). As a result, should you deploy the Head-End in the data center, make sure the control network subnet does not leak into the LAN. Use firewalls, access lists or route redistribution policies to prevent that.
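To make the rules above easier to reason about during a design review, here is a minimal Python sketch that checks a design description against them. Everything in it is an assumption made for illustration: the `GatewayPlan` structure, its field names and the example prefixes are hypothetical and do not come from SteelConnect EX or the Director API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from ipaddress import ip_network


@dataclass
class GatewayPlan:
    """Hypothetical description of a data center gateway design."""
    name: str
    # Sides of the gateway from which the controller can be reached: "wan", "lan".
    controller_reachable_via: set[str]
    # True if remote sites can only reach the controller through this gateway.
    in_path_to_controller: bool
    # Prefixes visible on the LAN side of the gateway.
    lan_prefixes: list[str] = field(default_factory=list)


def check_golden_rules(plan: GatewayPlan, control_subnet: str) -> list[str]:
    """Return human-readable rule violations (an empty list means none found)."""
    problems = []
    # Rule: the controller must be on the WAN side of the gateway.
    if plan.controller_reachable_via != {"wan"}:
        problems.append("controller must be reachable from WAN interfaces only")
    # Rule: the gateway can't sit between the controller and remote sites.
    if plan.in_path_to_controller:
        problems.append("gateway must not sit between controller and remote sites")
    # Rule: the control network must not leak into the LAN.
    control = ip_network(control_subnet)
    for prefix in plan.lan_prefixes:
        if ip_network(prefix).overlaps(control):
            problems.append(f"control subnet leaks into LAN via {prefix}")
    return problems


# Example with made-up values: the control subnet is also visible on the LAN.
plan = GatewayPlan("dc-gw-1", {"wan"}, False, ["10.10.0.0/16", "192.168.50.0/24"])
for problem in check_golden_rules(plan, "192.168.50.0/24"):
    print(problem)
```

A check like this only captures what you tell it about the design; the actual isolation still has to be enforced with firewalls, access lists or redistribution policies.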

Examples and counter-examples

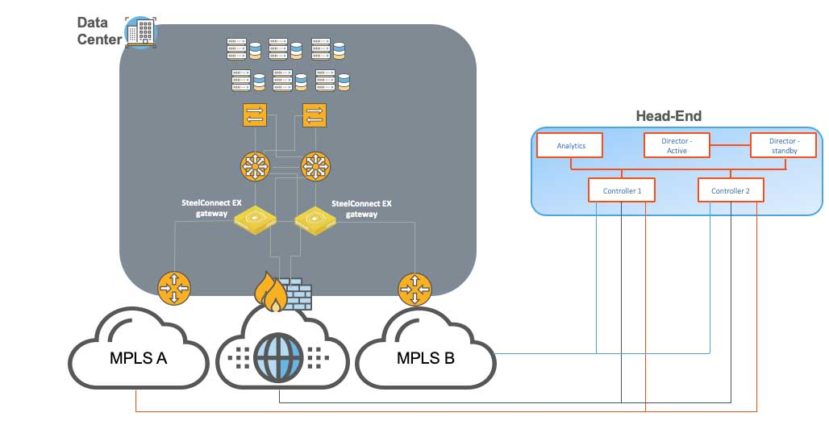

In the following example, the Head-End is hosted in a different site, in the Cloud or as a managed service. The data center appliances are inserted between the aggregation layers and the CE routers.

Note that it is not always possible to give the controllers direct access to all WANs, in particular in a Cloud-hosted setup. As long as there is network connectivity between all the SD-WAN gateways and the controllers, this is fine. This will be the topic of a future blog post.

We could easily replace the CE routers as well. At the moment, though, our appliances only offer Ethernet (copper or fiber) NICs.

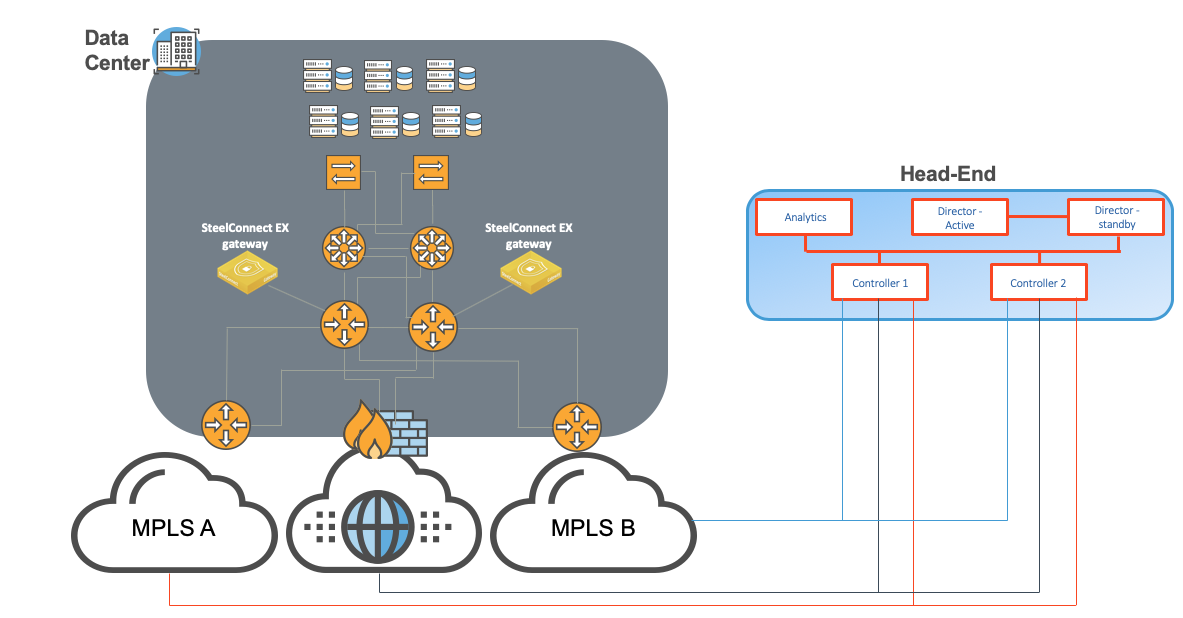

For risk-averse organizations that want to adopt SD-WAN with minimal disruption, it is also completely fine to deploy the following architecture. Here, the data center gateways are out-of-path and rely on route attraction and, conversely, on route retraction when a route disappears from the SD-WAN overlay network.

In the above example, there is only one connection depicted between the SteelConnect EX gateway and the WAN distribution router. In reality, we would need one per uplink (in this example, three connections: MPLS A, MPLS B and Internet) plus one for the LAN side. However, we could also rely on VLANs and have trunk connection(s) to transport LAN and WAN traffic.
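The route attraction and retraction behavior of the out-of-path design can be pictured as a simple set comparison. The Python sketch below is conceptual only: in a real deployment the routing protocol (BGP or OSPF) handles announcements and withdrawals, and the function name and prefixes here are hypothetical.

```python
def plan_route_updates(
    advertised: set[str], overlay_routes: set[str]
) -> tuple[set[str], set[str]]:
    """Compare what the gateway advertises with what the overlay currently knows.

    Returns (to_announce, to_withdraw).
    """
    # Attraction: prefixes learned from the overlay that are not yet advertised.
    to_announce = overlay_routes - advertised
    # Retraction: prefixes still advertised but no longer present in the overlay.
    to_withdraw = advertised - overlay_routes
    return to_announce, to_withdraw


# Example with made-up prefixes: one branch prefix appears, another disappears.
announce, withdraw = plan_route_updates(
    advertised={"10.1.0.0/16", "10.2.0.0/16"},
    overlay_routes={"10.2.0.0/16", "10.3.0.0/16"},
)
print("announce:", sorted(announce))
print("withdraw:", sorted(withdraw))
```

Once a prefix is withdrawn, the WAN distribution router stops sending that traffic to the gateway and falls back to whatever other route it has, which is what makes this design low-risk.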

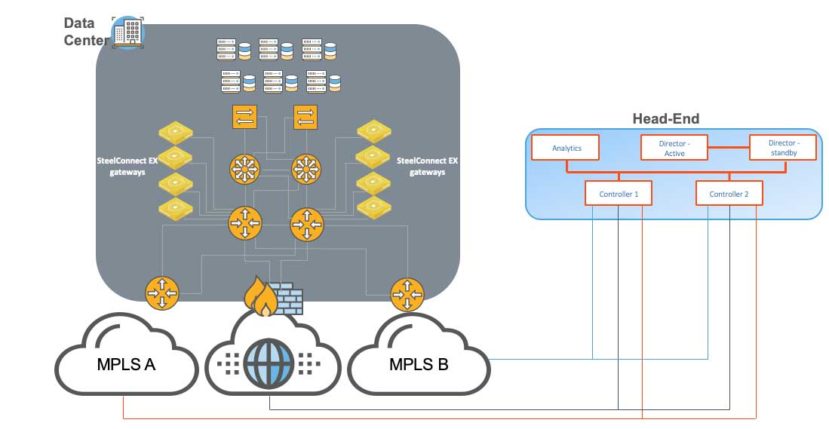

We can achieve high scalability and high throughput by horizontally scaling the number of gateways. This deployment is called a Hub Cluster and can be seen in the following topology example.

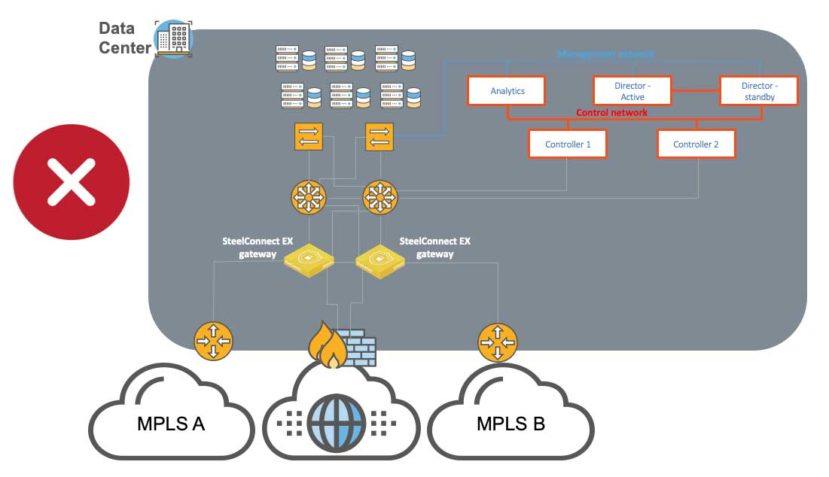
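As a rough way to think about horizontal scaling, the Python sketch below estimates how many gateways a Hub Cluster might need for a given aggregate throughput. The per-gateway figure and the spare-node count are assumptions you would replace with your own sizing data; none of the numbers are SteelConnect EX specifications.

```python
import math


def gateways_needed(required_mbps: float, per_gateway_mbps: float, spare: int = 1) -> int:
    """Estimate the cluster size: enough gateways for the load, plus spares.

    `per_gateway_mbps` and `spare` are placeholders for your own sizing inputs.
    """
    if required_mbps < 0 or per_gateway_mbps <= 0 or spare < 0:
        raise ValueError("throughput values must be positive and spare non-negative")
    return math.ceil(required_mbps / per_gateway_mbps) + spare


# Example with made-up numbers: 4.5 Gbps aggregate, 2 Gbps per gateway, one spare.
print(gateways_needed(4500, 2000, spare=1))
```

Tracking the real performance of the data center appliances as remote sites come online is the way to validate whichever figures you start with.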

In the previous examples, the Head-End was not hosted in the data center. For organizations that require all components to be deployed on-premises, solutions exist. Simply follow the golden rules. The following setup is not supported, as the controllers sit on the LAN side of the gateways.

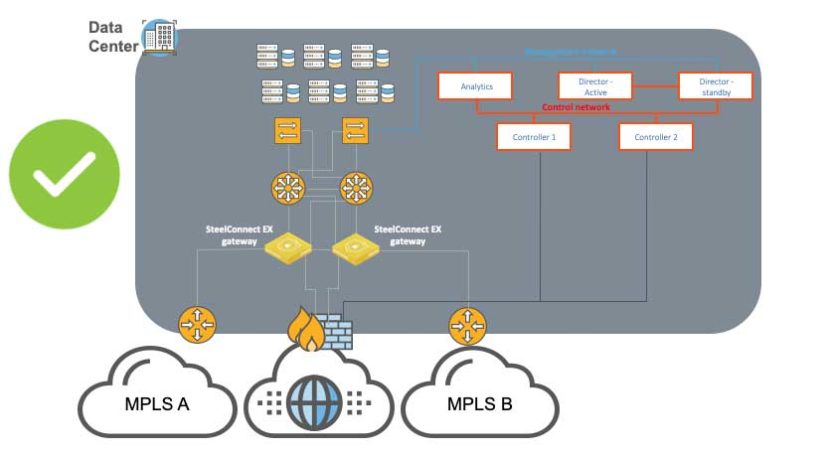

A potential solution to comply with that rule is depicted as follows:

Note that for the gateways to communicate with the controllers via the Internet uplink, they need to use the controllers’ public IP addresses. Indeed, when the Director pushes the configuration down to the appliance, if a public IP address is set up on the controller’s Internet uplink, that address is part of the configuration, not the private IP address. Therefore, the firewall should be configured to allow that communication.

It may happen that there are no LAN interfaces left on the CE routers. In this case, you could have the controllers connected only to the Internet. However, you would need to make sure that all SD-WAN sites have network reachability to the controllers, either through a direct Internet connection or through an Internet gateway within the MPLS cloud.
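The reachability requirement for Internet-only controllers can be written down as a quick inventory check. In this minimal Python sketch, the `Site` structure, its field names and the site names are hypothetical and only serve to illustrate the rule.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """Hypothetical inventory entry for an SD-WAN site."""
    name: str
    has_direct_internet: bool
    # True if the site's MPLS service offers an Internet gateway in the MPLS cloud.
    mpls_has_internet_gateway: bool


def sites_without_controller_path(sites: list[Site]) -> list[str]:
    """List sites that could not reach a controller connected only to the Internet."""
    return [
        site.name
        for site in sites
        if not (site.has_direct_internet or site.mpls_has_internet_gateway)
    ]


# Example with made-up sites: only the MPLS-only branch has no path.
inventory = [
    Site("branch-paris", has_direct_internet=True, mpls_has_internet_gateway=False),
    Site("branch-lyon", has_direct_internet=False, mpls_has_internet_gateway=True),
    Site("branch-nice", has_direct_internet=False, mpls_has_internet_gateway=False),
]
print(sites_without_controller_path(inventory))
```

Any site this flags would need either an Internet uplink or an Internet gateway in the MPLS cloud before the controllers can be moved to an Internet-only connection.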

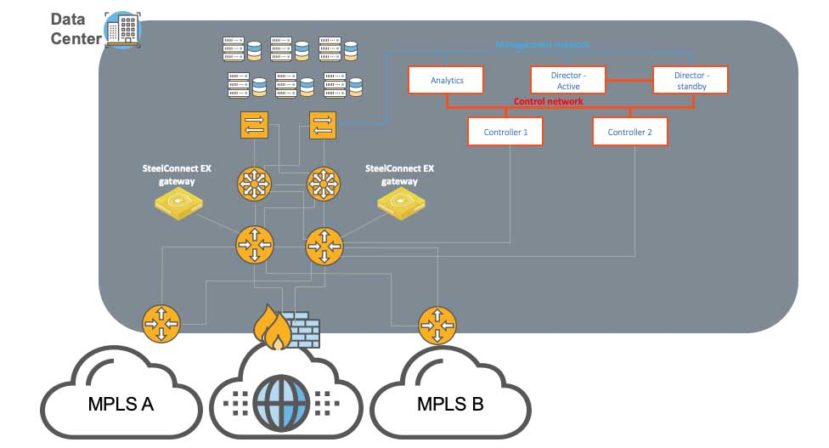

Should you keep your WAN distribution routers, having data center gateways and controllers at the same level will work too.

Checklist for a successful implementation

All data center networks are different, so there are questions to ask yourself when approaching a design. Here is a list, which does not claim to be exhaustive:

What are our goals and drivers? The answer to that question should remain at the center of all decisions and answers to the following questions.

- How many remote sites?

- What are our throughput requirements today and in the coming months?

- What are our requirements in terms of service resiliency and SLAs?

- What are the routing protocols in use? Can we use BGP or OSPF?

- Are we replacing the CE routers with the SD-WAN gateways or not?

- Can we integrate with WAN distribution routers?

- Do we need hardware appliances or will we go virtual?

- What are the interface type and speed requirements?

- Is there a WAN optimization solution in place?

- Can we allocate public IP addresses to the controller?

- How will we deploy the controller?

- Are we using the data center as a hub or transit site?

- Are there firewalls in the network path?

What have we learned today?

A data center is just “another branch” that requires its own SD-WAN gateway appliance, even if you host the Head-End there.

Please note that in the upcoming version 20.2, it will be possible to use a gateway appliance as a controller too; it will assume both roles at the same time. However, we will always need at least one dedicated primary controller. More details to come in a future post.

The SteelConnect EX is a router. Leverage all your routing knowledge to deploy it in your data center.

A question, a remark, some concerns? Please don’t hesitate to engage us directly on Riverbed Community.

This post was first published on Riverbed Blog’s website by Romain Jourdan. You can view it by clicking here